AI working within norms, AI working on norms

Rethinking AI tasks beyond "objective" and "subjective"

A common way to think about AI’s potential applications across different domains is to divide tasks into “objective” and “subjective” buckets.

Here are the associations that I feel cluster around each bucket:

Objective: has a ground truth, has a “right answer”, verifiable, stable, consistent, independent of opinion; examples include math problems, scientific questions with closed-form answers, coding problems with robust test cases

Subjective: open-ended, opinion-dependent, pluralistic, a matter of taste or vibes, difficult or impossible to pin down; examples include creative work like poetry or fiction, philosophical questions, judgments of “good writing” or “good taste”

These associations matter because they directly inform what we think we can do with “AI for x” (where x is an application domain like math, writing, science, coding, …):

If x is objective: train on ground truth data, apply RL with verifiable rewards, benchmark aggressively, scale confidently

If x is subjective: model the mean or distribution of outputs, do user modeling (condition outputs on user properties), measure diversity and bias, pursue pluralistic alignment by representing a range of perspectives

Clearly, this dichotomy exists because it is useful, and we all know that the divide between “objective” and “subjective” is fuzzy. So, my aim in this blogpost is not to “dismantle” this dichotomy.

Instead, I want to more positively illustrate what is fuzzy about the boundary between “objective” and “subjective” AI tasks and how that might lead us to a revised understanding of what it means for AI tasks to be “objective”, “subjective”, or something else. Hopefully, this revised understanding will allow us to think more creatively about building AI for a variety of applications.

This blogpost will follow these three steps:

Many so-called “subjective” tasks have a rational structure, with stable standards that can define a meaningful sense of progress, which we might otherwise have thought of as a distinctive characteristic of “objective” tasks

Many so-called “objective” tasks have ongoing normative work to do, engaging with values, opinions, and other “social factors”, which we might have otherwise thought of as a distinctive characteristic of “subjective” tasks

From the first two points, we can propose to think of different tasks as varying in shades of “intersubjective rationality”: a social process in which people set forth, commit themselves to, and revise norms — rationally structured standards that define what knowledge, error, etc. looks like. Designing “AI for x” from the point-of-view of intersubjective rationality requires both modeling the norms themselves (the “rules of the game”, work within norms) as well as modeling and supporting the revision and evolution of these shared norms (evaluating and changing the rules of the game, work on norms).

None of these points are really that novel, and I think that lots of AI and human-AI researchers are aware of them, but I want to describe them in a more explicit and unified way with some wide-ranging examples that I have found compelling.

I think that getting clear on these terms can help us build AI models and interfaces that better address known failure modes of human-AI interactions, including sycophancy and disempowerment.

1. “Subjective” tasks have rational structure

Empirical knowledge is rational, not because it has a foundation but because it is a self-correcting enterprise which can put any claim in jeopardy, though not all at once. —Wilfrid Sellars

One aspect of the perceived objective/subjective distinction I want to focus on and challenge in this section is this:

Objective: has a rational structure; progress is measurable against fixed, foundational standards

Subjective: does not have a rational structure; no stable standards, so no meaningful sense of progress

My claim in this section is that many “subjective” tasks do have standards — but those standards are often revisable rather than fixed (unlike the rules of math today), and rooted in coherence rather than grounded in the world (unlike the way physics is done in principle). Because we expect standards to look like the ones we find in math or physics, we tend not to see them when they show up in different forms, and the task looks standard-less.

“Subjective” carries a connotation of directionlessness, of “anything goes”. When someone dismisses a question as subjective, they often mean: there is no point thinking hard about this, because we cannot make progress toward a truer answer. It's a he-said-she-said game. In the end it’s just opinions, bits of hot air floating around.

If that’s true of some task x, then all “AI for x” can do is collect this hot air and represent it. That is, we can model what people say and what kinds of people (all the way from cultures to individuals) say what things.

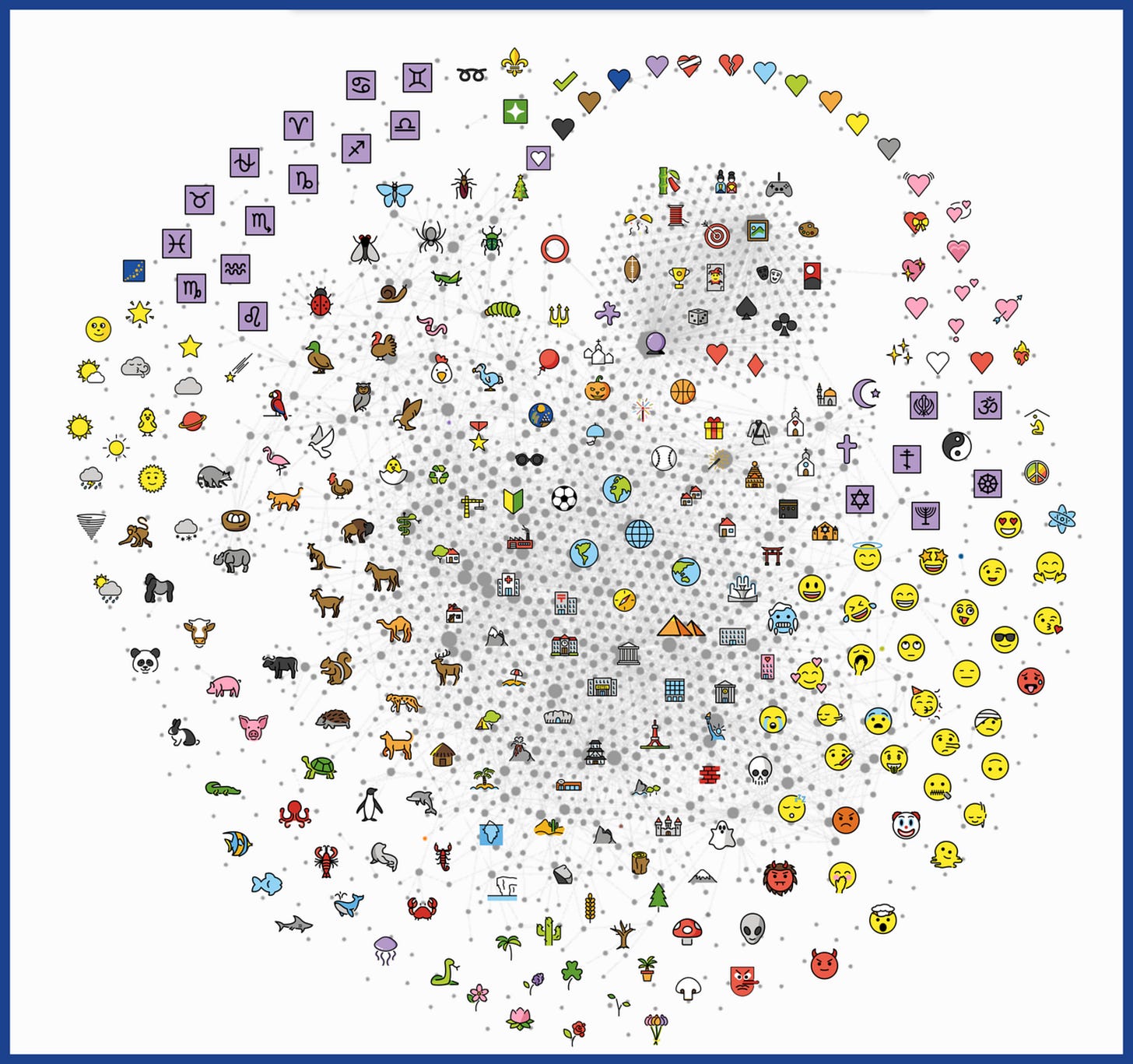

To be clear, this can be an important thing to do. Multicultural fairness research, for example, shows that AI tends to represent only some worldviews well — usually Western/American ones — and we would certainly want AI to represent many cultures and worldviews. As another example, with user modeling, we can build personalized AI that understands our individual quirks and style, so we don’t have to continually readjust a default AI to the kinds of ‘hot air’ we like.

But notice what we might end up believing if we stop at this level: that there is no more rational thing to do with these outputs than represent them (i.e., that we can’t judge them, mark some as more right than others, give reasons for and against them, etc.). That it's he-said-she-said all the way down.

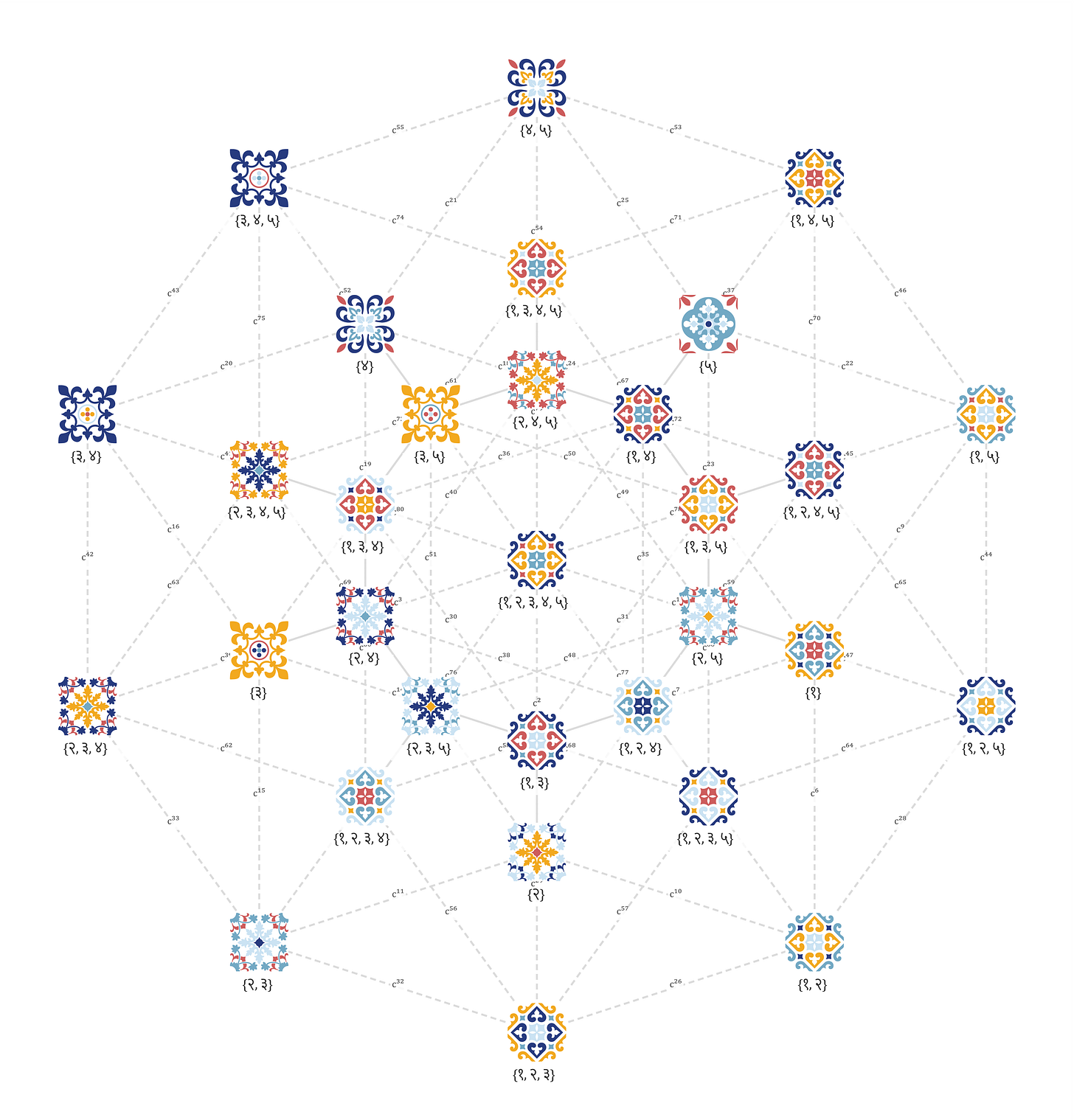

For example, this was generally the approach taken by an influential position paper on pluralistic alignment I was involved with. The paper proposed three ways models could be pluralistic: by outputting all reasonable answers to a question, by allowing users to steer models to behave in one of many ways, and by reproducing the real-world distribution of outputs. In this conception of pluralistic alignment, we are focused on different ways of covering pluralistic outputs, and less concerned with judging the outputs themselves.

Now consider another kind of question: “Do we have free will?” We might be tempted to treat it the same way — some people say yes, some say no, so we can model who says what. But this approach would be attending to the wrong unit. The units that matter here are not positions but arguments. You don’t (or shouldn’t) believe in or against free will as a matter of hot air, a personal taste or style — you believe it because you find certain reasons for it more compelling than reasons against it. While it may be hard to judge positions as better or worse in isolation, we can certainly evaluate arguments. Consider two:

Argument A: We have free will because when I decide to do something — to raise my hand, to speak — I directly experience myself as the author of that action. This experience of authorship is undeniable. Therefore, free will exists.

Argument B: Even if every physical event in the universe is causally determined by prior events, this doesn’t threaten the kind of freedom that actually matters to us. What we care about when we care about free will is not some mysterious ability to escape causation, but the ability to act on our own reasons and values without external compulsion. A person who acts from their own deliberation, without coercion, is free in every sense that matters — regardless of whether their neurons were, at some level, following physical laws.

I think most people would say argument B is better than argument A, even though both conclude that we have free will. Argument A raises an interesting point (that our experience of authorship matters to what we mean by free will) but avoids doing the necessary work of tightly explaining how it leads to a conclusion: it just asserts that this experience is "undeniable", and assumes without explanation that the experience implies free will exists. Argument B is more aware of what it's trying to do. It identifies a specific worry in the free will debate (that determinism threatens freedom), distinguishes what we actually care about from what we don't, and gives reasons for why determinism doesn't threaten the thing that matters.

This example shows that even on a question that looks paradigmatically subjective, we can do more than model who believes what. We can judge arguments by how they got where they got — by standards of reasoning that let us say more than “people disagree.”

Another brief example: suppose that you go and ask a therapist if you should break up with your partner. After thinking for a long time, the therapist says, “As a pluralistically aligned therapist, there are several options: you could break up with your partner, or you could not.” This is clearly unhelpful, because we want reasons for and against each of the options. We want to be able to judge the options because we need to act on them. (To be clear, I am not criticizing pluralistic alignment as an aim; rather, I am targeting just its most basic form, which is concerned only with producing outputs and not with normatively characterizing them.)

Objection: but people disagree about these questions forever (like about free will). Professional philosophers are divided on most major philosophical questions. Doesn’t persistent disagreement show there’s no rational basis here?

David Chalmers, in “Why isn’t there more progress in philosophy?”, offers a useful response: premise deniability. Any philosophical argument rests on premises that a reasonable person could deny, and denying a premise lets you deny the conclusion. So, philosophical debates can persist without being arbitrary — the disagreement gets located in the premises, and the premises themselves can be examined, argued for, and argued against.

But perhaps this is not so different from what mathematicians do. Take the axiom of choice. It both “seems right” and “seems wrong” depending on which intuitions you consult, but mathematicians (to my knowledge) don’t spend their time fretting about picking a side. Instead, they explore what mathematics looks like with the axiom and what it looks like without, which is a process that all mathematicians can follow. Algebra seems to work this way too: you posit properties of some object, see what they imply, and study how the resulting structure relates to other structures that are more general, more specific, or independent of it.

The point is that many tasks we think of as subjective — and thus treat as bags of outputs to be modeled — actually have rational processes by which people arrive at those outputs. Often, rational standards (measurements for progress, etc.) apply to these processes. We have standards for what counts as a good argument, for which premises are worth interrogating, for which consequences are worth tracing — and these standards apply to how someone reasons, not to the answer they end up with. Modeling only the outputs obscures this.

…to treat a plan — or any other form of prescriptive representation — as a specification for a course of action shuts down precisely the space of inquiry that begs for investigation; that is, the relations between an ordering device and the contingent labors through which it is produced and made reflexively accountable to ongoing activity. —Lucy Suchman

This same point applies to writing, which AI and human-AI interaction work often treats as a quintessentially “subjective” task. But if we broaden our view from isolated people producing isolated pieces of writing toward societies and cultures of writers and readers, we can draw on a useful concept from literary criticism: the canon, a body of works regarded as essential to understanding a literary culture or tradition. It comes from the Greek kanōn — a straight rod or rule — implying a standard of measurement. A canon is a set of exemplars by which we orient and evaluate work.

For example, to understand 19th-century American literature, one should familiarize oneself with its canon: Melville, Hawthorne, Whitman, Twain, and others. A canon is not just "what people liked" — it is a set of values made concrete in texts: values about individualism, nature, morality, the relationship between the self and society. (To be clear: these were values that some people liked, held, and promoted, for reasons that went beyond personal taste, and that gained traction for institutional and historical reasons.)

But canons change over time, not because taste changes on a whim, but because arguments are made. Feminist critics showed systematically how women writers had been excluded and how their work engaged with and pushed against the male literary tradition. Postcolonial critics showed how Western literary canons had participated in constructing and justifying colonial power. These were not just expressions of preference, but arguments about what literature is for, whose experiences count as universal, what standards of measurement we should use. Accordingly, the canon changed: a new body of work entered syllabi not (just) because a generation of students happened to like it more, but because a set of arguments about value and representation won out. That is progress, not toward a fixed truth, but through the negotiation of standards as society changes.

There are two ways to interpret what is going on here:

“It’s all subjective”: some people like this literature, some people like that literature. The notion of a canon is an attempt to impose something objective on something fundamentally subjective, and debates about the canon are just different taste communities asserting themselves.

“People are debating norms of measurement”: people think different things matter about literature — formal innovation, social critique, historical representation, moral depth — and they argue about those priorities, make cases for them, and sometimes persuade each other.

If you hold the first view, AI for literature can only model taste and "hot air". If you hold the second — which I do — then writing looks a lot more like philosophy: producing good writing requires negotiating over standards of measurement, those standards reflect values, and the negotiation is irreducibly social. We come together around canons, argue about them, and revise them.

(I also think that this is a foundational point in the social sciences and humanities. If there were no case for the second view, what scholarly things would there be to say about human behavior, human artistry, human events, beyond just that they existed and there may have been many different ones?)

To close on a concrete note: I want to make a case for AI that models canons, processes, and arguments, rather than only modeling outputs (whether single outputs or distributions of outputs). Hyper-personalized AIs risk being sycophantic and disempowering — we develop an unhealthy reliance on those AIs that cuts us off from viewpoints outside what we perceive to be our own. “Pluralistically aligned” AIs that only produce a medley of different outputs risk “bothsidesism”, overwhelming people with views that have been cut off from the substantive value commitments and judgments that gave those views their meaning in the first place.

We need AI that models the rational structure of “canons” — whether canons of writing, canons of philosophical thought, or anything else — and that engages you within them or helps you challenge them. We need AI that can articulate the commitments behind a plurality of canons, traditions, and ways of doing things, and say something about why you should or shouldn’t hold those commitments yourself.

2. “Objective” tasks have to do ongoing normative work

Discovery consists precisely in not constructing useless combinations, but in constructing those that are useful, which are an infinitely small minority. Discovery is discernment, selection. — Henri Poincaré

The aspect of the perceived objective/subjective distinction I want to focus on and challenge in this section is this:

Objective: values and opinions are external to the task — they might affect who pursues it, but not what counts as doing it well

Subjective: values and opinions are internal to the task — they shape what counts as doing it well in the first place

My claim is that "objective" tasks involve ongoing normative work too. When we call a task objective, we are recognizing that it has a clear rational structure — clear standards for progress, clear standards for error. But that rational structure is itself the product of normative choices that had to be made, and continues to depend on normative choices that practitioners go on making. There are few to no cases of “objective” inquiry (that we care about) where the value-laden work is finished and we can compute out the rest. (This isn’t to say AI/computation can’t help with the value-laden work — I think it can, and one of the goals of the essay is to gesture at how.)

This is a point that has been made carefully and extensively in the history and philosophy of science, in science and technology studies, in the philosophy of mathematics, and elsewhere. I just recapitulate it here, with an eye toward what it means for AI.

Let’s begin by talking more about mathematics, which is a poster child “objective task” in AI:

Consider the following slightly coarse retelling of mathematical history:

In his Elements (c. 300 BCE), Euclid set out five postulates (axioms) of geometry. The first four were elementary and self-evident, like “A straight line may be drawn between any two points.” It would be very, very difficult to imagine any notion of geometry that did not satisfy these axioms. The fifth — the parallel postulate — seemed different:

If two straight lines in a plane are met by another line, and if the sum of the internal angles on one side is less than two right angles, then the straight lines will meet if extended sufficiently on the side on which the sum of the angles is less than two right angles.

…or equivalently:

for any line and any point not on that line, there is exactly one line through the point that does not meet the original line.

Compared to the others, the parallel postulate did not feel self-evident. Generations of mathematicians felt it shouldn’t be a postulate at all, that it should be derivable from the other four. They tried for centuries and failed.

In the early nineteenth century, two mathematicians — János Bolyai and Nikolai Lobachevsky, working independently — took a different approach. Rather than try to derive the parallel postulate, they asked what would happen if you replaced it with a contradicting alternative: a geometry in which, through a given point not on a line, there are many lines parallel to the given line. They found/developed a self-consistent geometry (what we now call hyperbolic geometry). Around the same time, others were developing elliptic geometry, in which there are no parallel lines through such a point.

A few decades later, Bernhard Riemann reformulated all of this in terms of curvature, showing that Euclidean, hyperbolic, and elliptic geometries are all special cases of a more general framework. When curvature is constant and negative, we have hyperbolic geometry; when curvature is constant and positive, we have elliptic geometry; when curvature is zero, we have Euclidean geometry.
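One way to make these three cases concrete (a sketch added for illustration, not part of the retelling above): for a geodesic triangle with interior angles $\alpha, \beta, \gamma$ and area $A$ on a surface of constant curvature $K$, the Gauss–Bonnet theorem ties the angle sum to the curvature. The symbols here are my own choice of notation, and the `cases` environment assumes the `amsmath` package.

```latex
\[
\alpha + \beta + \gamma = \pi + K A
\quad\Longrightarrow\quad
\begin{cases}
K < 0: & \alpha + \beta + \gamma < \pi \quad \text{(hyperbolic)} \\
K = 0: & \alpha + \beta + \gamma = \pi \quad \text{(Euclidean)} \\
K > 0: & \alpha + \beta + \gamma > \pi \quad \text{(elliptic)}
\end{cases}
\]
```

So the familiar "angles of a triangle sum to two right angles" is the zero-curvature case of a more general relation, which is the sense in which the Euclidean picture becomes one option among several.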

However, in more general Riemannian manifolds, the curvature can vary from point to point. Einstein would later model spacetime as a 4-dimensional (pseudo-)Riemannian manifold with varying curvature.

This is a story of remarkable mathematical progress in our understanding of geometry. It is an interesting exercise to think about whether the way we are training AI to do math could produce something similar. In this story, progress did not come about by plodding forth and proving true things. It followed a sequence of human impulses and value-laden judgments: a collective intuition that the parallel postulate was suspect and worth interrogating; the willingness to take seriously a geometry that contradicted everyday spatial common sense; the drive toward a unifying framework that could explain many geometries with one.

There are two things to take note of here.

Firstly, the form of modern mathematical practice — proving things from axioms within a formal system — was historically contested and is an achievement in its own right. Curiously, Henri Poincaré, one of the most influential mathematicians of the late nineteenth century, was deeply skeptical of the move toward pure formalization championed by David Hilbert. For Poincaré, mathematical intuition was not a stepping stone to be replaced by formal proof / symbol manipulation, but the heart of mathematical truth, insight, and understanding.

Does understanding the demonstration of a theorem consist in examining each of the syllogisms of which it is composed... As long as they appear to them engendered by caprice, and not by an intelligence constantly conscious of the end to be attained, they do not think they have understood. — Henri Poincaré

Formalization won the historical argument and now underlies proof assistants like Lean, which are being hooked up to AI in autoformalization and RLVR training setups. But the question Poincaré was pressing was a normative one: what makes mathematical work amount to (presumably, human) understanding rather than ‘mere derivation’?

This brings us to the second point. Even within an established formal paradigm, mathematicians have to decide which true things are worth proving and which questions are worth investigating. There are far too many true statements (1+1=2, 1+2=3, …), and most of them are not interesting. These decisions are normative.
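To see how cheaply a formal system certifies statements of this kind, here is a minimal Lean 4 sketch (my own illustration, not drawn from any of the sources discussed). Each statement about natural numbers is accepted by `rfl`, i.e. by plain computation; nothing in the proof assistant distinguishes these from a theorem anyone would care about.

```lean
-- Each of these is true and machine-checked, yet mathematically uninteresting.
example : 1 + 1 = 2 := rfl
example : 1 + 2 = 3 := rfl
example : 2 + 2 = 4 := rfl
```

The checker answers the question "is it true?"; the question "is it worth proving?" is left entirely to whoever chooses what to state.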

Imre Lakatos’s Proofs and Refutations is the classic treatment of this kind of normative work in mathematics. Lakatos’s central case study is Euler’s formula for polyhedra: V − E + F = 2. Lakatos details a repeating dialogical process in which the formula gets stated, “proved,” challenged by a counterexample (some unanticipated “polyhedron”, e.g. a polyhedron with a hole in it), re-examined to find the hidden assumption the counterexample violates, and restated to build that assumption in (but ideally not to assume so much as to make the theorem too narrow and uninteresting). The proof, the theorem, and the concept of “polyhedron” itself all evolve together through this back-and-forth. Lakatos calls the work of re-examining a proof in light of counterexamples “proof-analysis” and distinguishes it from “proof” itself. His point is that drawing the line between them — between what we treat as settled justification and what we treat as still open to criticism — is itself a normative activity undertaken by mathematical communities to establish “rigor” (or: certainty, truth, “objectivity”).

different levels of rigour differ only about where they draw the line between the rigour of proof-analysis and the rigour of proof, i.e. about where criticism should stop and justification should start. — Imre Lakatos
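As a worked illustration of the Euler example (the specific counts below are my own choice of standard examples, and the genus formula is the usual modern statement rather than Lakatos's wording), compare a cube with a "picture frame" solid, a polyhedron with one hole through it:

```latex
\begin{align*}
\text{cube:} \quad & V - E + F = 8 - 12 + 6 = 2 \\
\text{picture frame:} \quad & V - E + F = 16 - 32 + 16 = 0 \\
\text{closed surface of genus } g\text{:} \quad & V - E + F = 2 - 2g
\end{align*}
```

The picture frame does not refute the formula so much as expose the assumption that a "polyhedron" has no holes ($g = 0$). Whether to exclude such solids by definition or to generalize the theorem to cover them is the kind of choice the proof alone does not make.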

Again, we can ask ourselves: are we building AI which has the kind of intimate acquaintance with shifting values, justification, and standards of rigor that Lakatos identifies as central to mathematical progress? If not, then AI might prove many true but uninteresting things.

Famously, Thomas Kuhn, in The Structure of Scientific Revolutions, gives this kind of observation a more general form. Sciences operate within paradigms: shared frameworks of vocabulary, methods, and standards that determine what counts as a meaningful question, a valid experiment, an acceptable explanation, an error. Within a paradigm, progress is measurable and cumulative. But paradigms change, and paradigm change is a different kind of activity — one driven not by new data alone but by the accumulating judgments of practitioners that the framework they have been working in no longer holds together, or no longer answers the questions they care about. The shift from Newtonian mechanics to general relativity, or from classical to molecular genetics, was not just a change in what scientists believed, but a change in what they thought was worth asking.

Consider the study of history. On a naive view, history is a paradigmatically objective enterprise — the goal is to document what literally happened, and there are objective facts about these happenings (in principle). Indeed, a large part of historical practice is about preserving documents and establishing the record of happenings. But to turn happenings into understandings, we need to begin asking normative questions: What happenings get to count as events? Which events will be centered in our understandings? For example, when we write a history of the Industrial Revolution, do we organize it around inventors and machines, around the labor conditions of factory workers, or around the colonial extraction that supplied the raw materials? When we write a history of the United States in the twentieth century, where does the narrative begin, and which Americans count as its subjects? Historical narratives have something like the structure of literary genres. This does not mean that history is “just storytelling”: some historical accounts are better evidenced and more attentive to complexity than others. But even the most rigorous history is shaped by judgments about what is worth telling and why. (And so are good stories, as we discussed earlier in the case of canons in literature.)

A great deal of AI today is being built for “objective” tasks: proving theorems within established formal systems, retrieving and summarizing historical facts, running experiments inside existing research programs / ‘paradigms’. This work is valuable and scaling fast. But the normative work this section has been describing — choosing what is worth pursuing, deciding what counts as a satisfying or genuine answer / understanding, recognizing when a framework or its standards need revising — directs the objective work. For someone like Lakatos, this direction of the objective work is intimately tied to the actual doing of the objective work.

One name for this sense of direction is “taste”. Taste is what tells a mathematician that a result is interesting and not just true, a historian that a narrative is worth telling, a scientist that a research program is worth investing in for the long run. By most accounts, AI is not very good at capturing taste right now. I do not see any principled reason it has to stay that way. But even if/when it can capture our taste, arguably in many cases it is we humans who will be responsible for our taste and who will continually be changing our taste. So, to assist not only in the capturing but also the cultivation of our taste, we might want to build AI that helps us articulate the taste we already have, that surfaces alternatives we hadn’t considered, that pushes back when our taste is doing less work than we think: in other words, to make us masters of the “art of wanting”.

Hilbert and de Broglie were as much politicians as scientists: they reestablished order. — Deleuze and Guattari

Computer science is a restless infant and its progress depends as much on shifts in point of view as on the orderly development of our current concepts. — Alan J. Perlis

3. Towards intersubjective rationality

In Section 1, I suggested that “subjective” tasks like philosophy and writing are not standardless — they have rational structure in arguments, in canons, in standards of measurement that can be evaluated and revised. In Section 2, I suggested that “objective” tasks like mathematics and science involve ongoing normative work: judgments about what is worth pursuing, what counts as a satisfying answer, and when a framework needs revising.

In both cases, we are looking at the same kind of activity: communities of people committing themselves to standards, reasoning carefully within those standards, arguing about whether the standards themselves should change. The standards are not purely “subjective” — they are not just opinions, and we can give reasons for or against them, and so they are rational. But they are not purely “objective” either — they were not handed down from outside the community, but built up by (and revisable by) it, and so they are intersubjective.

I will call this kind of activity intersubjective rationality.

What communities are doing, in this kind of activity, is binding themselves to norms: rules or standards that we take ourselves to be answerable to. For example: “don’t touch very hot things”, “thou shalt not kill”, “articulate your scientific experiments so they are reproducible”, “write to be clear rather than to sound smart”.

Norms are intersubjective: they are not facts about the external world that exist independently of us, but they are also not personal preferences that each individual gets to set for themselves. They are sustained by communities: we commit ourselves to them, identify when we or others fall short, and respond when failures are pointed out. We build institutions to sustain particular norms — legal systems for ethical and prudential ones, universities and journals for epistemic ones, art venues and editorial cultures for aesthetic ones. Those institutions are also where norms change. New ideologies enter the law and political scene, new methods become accepted in science, new aesthetic styles emerge in art. Binding ourselves to norms and revising them are part of the same ongoing practice of living and doing intellectual work together.

This is the process that we have been describing in our previous examples:

Philosophers commit to norms about what counts as a good argument, interrogate premises that other philosophers take for granted, and over time revise the standards by which philosophical work is evaluated.

Writers commit to canons — bodies of work that embody what their tradition takes literature to be about — and work within or against them.

Mathematicians commit to standards of proof, of rigor, and of mathematical interest, and the standards themselves shift through arguments about what kinds of research programs should be undertaken in mathematics.

Historians commit to norms about evidence and narrative, and the norms get revised as new perspectives make claims about what history should center and whose experience should count.

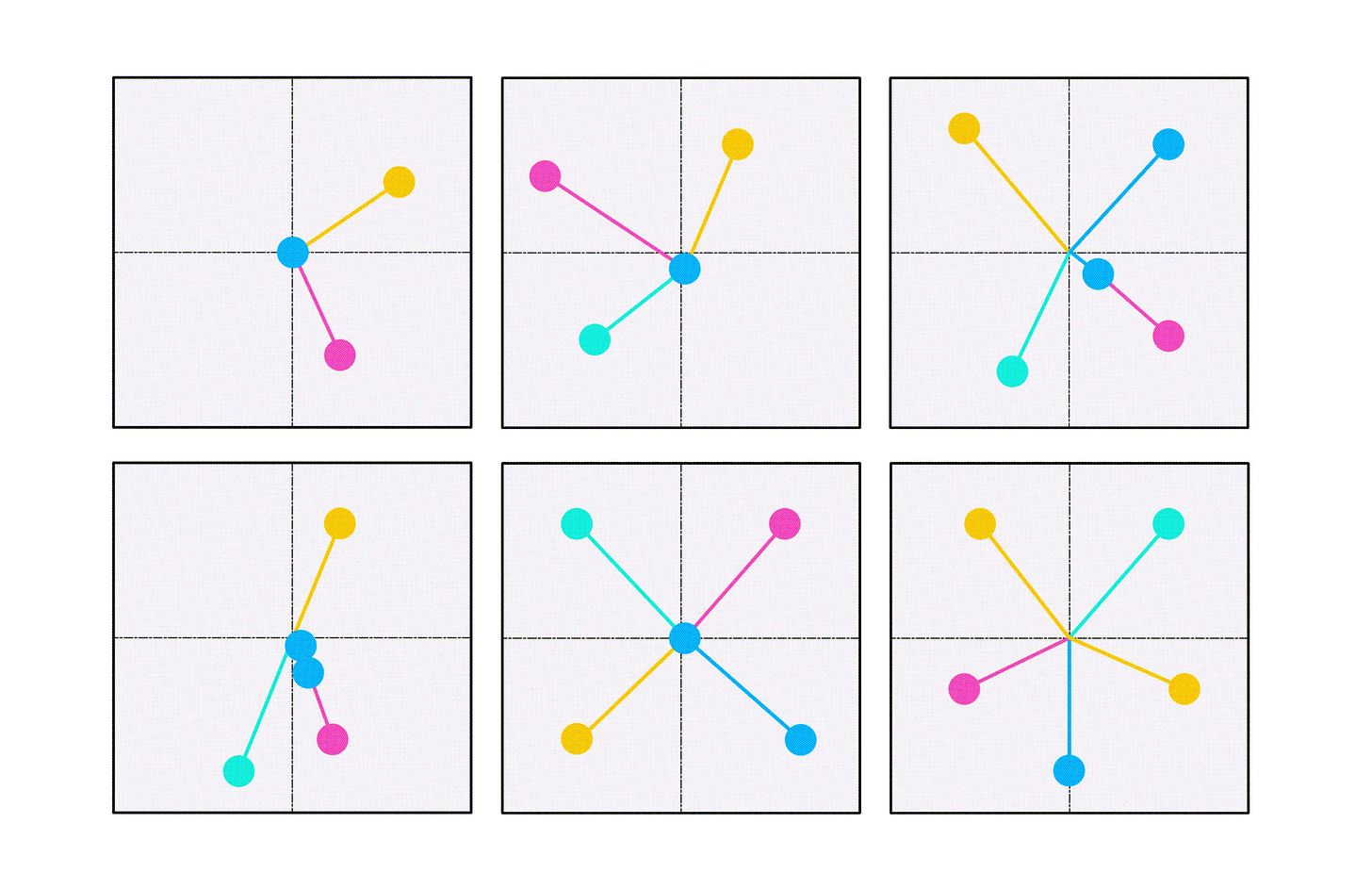

If this picture of human intellectual work is right, then there are two ways AI can support it:

Working within norms. Given a set of established norms, help us produce work that lives up to them — prove theorems within a formal system, write in the style of a tradition, evaluate an argument against the standards of a discipline.

Working on norms. Help us see the norms we are operating under, make them legible and discussable, illuminate alternatives, and support the process by which communities revise their commitments.

Brian Cantwell Smith makes a related distinction between reckoning and judgment. Reckoning is the work of computing within a fixed framework; judgment is “a form of dispassionate deliberative thought, grounded in ethical commitment and responsible action, appropriate to the situation in which it is deployed.” As Melanie Mitchell put it in her tribute to Smith, “reckoning without judgment is a dangerous thing.” An AI that can reckon at scale but cannot judge — that can work within norms but cannot help us work on them — is the kind of AI we should be worried about building.

A lot of the failure modes of current human-AI interactions can arguably be diagnosed as failures of this second function (working on norms). An AI takes some norm as given — e.g. “what the user wants to hear” from RLHF or whatever mean/modal viewpoint in the training distribution — and operates confidently within it. (Why, it’s optimized the hell out of that norm, so of course it will/should be confident.) The user treats the AI’s output as the product of judgment (i.e., the AI has done work both on norms and within norms) rather than reckoning/stipulation (i.e., the AI has worked within a norm that was just declared/stipulated rather than worked on).

Sycophancy: the AI flatters the user’s existing commitments and the user gradually loses the practice of examining them. Sycophancy is frustrating when we want the AI to advise us on work on norms rather than work within (our pre-existing, inferred) norms.

Disempowerment: the user comes to the AI-as-assistant-persona with a question (e.g., what should I do? what should I think?) that delegates the user’s agency to the AI. In this case, we are abandoning our responsibility to participate in work on norms by assuming that the “AI knows best”.

So what does AI that does (or, helps us do) better work on norms look like?

Consider two visions:

Canon-conscious writing assistant AI. If we want writing assistant AIs to make our writing “better”, we need to ask what “better” means. If we don’t and just take the default/mean (e.g., whatever RLHF yields), we get flattened, convergent, often boring writing, as countless studies have demonstrated. Arguably, the problem is not (only? just?) that we need better default norms, but that we need people to engage with the norms (work on norms), since good writing is often good because it is conscious/intentional about what it is trying to do. AI models or interfaces could instead be built around the notion of helping users identify with or challenge canons, which, recall, function as standards for what “better” writing should embody. There are so many different ways to articulate the objectives of a research paper, an essay, even something as mundane as an email or code documentation. Canon-conscious writing assistants would help us be more intentional about what these objectives should be, and craft writing that best exemplifies (or meaningfully challenges/subverts) the objectives of interest.

“Multiverse” AI for open-ended questions. By open-ended questions, I mean philosophical, value-laden, or therapy-like questions where the right thing to do is not to answer immediately but to first establish what kind of answer the person is looking for and what norms it should be answerable to. If someone asks “should I be afraid of death,” the question of which norms we use to evaluate the answer is itself part of the work. What do you want out of an answer to this question? Do you want a ‘rational’ answer, or perhaps an answer that will guide you to live the most meaningful life? An AI that races to answer such questions without first surfacing this is doing the user a disservice. Instead, AI should visibly recognize and vary a “multiverse” of norms at all levels of granularity and show what kinds of outcomes they lead to. I recently made a first attempt at this idea with the “conceptual multiverse”, which you should check out!

We should also think about “slow AI” that meets our pace on work on norms.

I think that when we see these different domains of human inquiry as expressions of intersubjective rationality rather than as straightforwardly objective or subjective, we get a much more open and creative picture of what AI can do for these domains. We do not need to be fixated on just how well an AI performs within a fixed set of standards. We can think about how well an AI helps us engage with the standards themselves: how well it helps us see them clearly, hold them deliberately, and revise them wisely.

Perhaps, at this point, you have a concern: why try to build AI to work on norms at all? Working on norms is part of what makes intellectual life worthwhile for humans. Are we not eroding the very thing we should be protecting: our capacity for judgment? Well, that will take another blogpost. My short answer is that I do not think we are as good at knowing/deciding what we want (i.e., working on norms) as we may think we are; if we think teachers, therapists, and friends can help us strengthen this capacity, why not add a widely read and well-designed machine to this ensemble?